From Art to Assets: Building a High-Velocity Generative AI Workflow

The aesthetic fascination with generative AI often masks a harsh operational reality: high-art experimentation does not equal high-volume publishing. For creative teams tasked with filling a daily social calendar or a relentless blog cycle, the "prompt-and-pray" method is a productivity killer. When you are waiting sixty seconds for a high-fidelity model to render an image that might—or might not—include the right number of fingers, you aren't just losing time; you are losing the momentum required for iterative creative work.

The transition from AI as a "toy" to AI as a "tool" requires a fundamental shift in how we build visual assets. Content teams shouldn't be chasing the perfect prompt for hours. Instead, they need a modular assembly line. This involves using lightweight models for rapid drafting and dedicated editing environments to fix specific failures without starting from scratch. By adopting a tiered workflow, creators can prioritize speed where it matters and precision where it’s necessary.

The Efficiency Wall in Generative Asset Production

Most content teams hit a wall when they try to use top-tier, heavy foundation models for every single asset. These models are impressive, but they are often over-engineered for a simple LinkedIn header or a background for a YouTube thumbnail. The hidden cost of "re-rolling" prompts in these heavy environments is significant. If a generator takes a minute to produce four options and none of them fit the brand guidelines, that is a minute of pure downtime for the operator. Multiply that by twenty iterations, and a single social graphic has cost a designer twenty minutes of waiting before any real design work begins.
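
To make the arithmetic concrete, here is a minimal Python sketch of render wait time across a re-roll loop. The one-minute heavy batch and the twenty iterations come from the scenario above. The five-second lightweight turnaround is an assumption borrowed from the Tier 1 target later in this piece, not a measured benchmark, and the constant and function names are illustrative.

```python
# Illustrative figures: 60 s per heavy batch and 20 iterations come from the
# scenario in the text; 5 s per lightweight draft is an assumed Tier 1 target,
# not a benchmark of any real model.
HEAVY_SECONDS_PER_ROUND = 60
LIGHT_SECONDS_PER_ROUND = 5
ITERATIONS = 20


def waiting_minutes(seconds_per_round: float, iterations: int) -> float:
    """Total time spent waiting on renders, in minutes."""
    return seconds_per_round * iterations / 60


heavy = waiting_minutes(HEAVY_SECONDS_PER_ROUND, ITERATIONS)
light = waiting_minutes(LIGHT_SECONDS_PER_ROUND, ITERATIONS)
print(f"heavy model: {heavy:.1f} min of waiting")  # heavy model: 20.0 min of waiting
print(f"light model: {light:.1f} min of waiting")  # light model: 1.7 min of waiting
```

The same twenty rounds of exploration take under two minutes of waiting on the faster model. That gap is what makes a "fail fast" loop practical.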

This inefficiency stems from the "one-shot" myth—the idea that if you just write a clever enough prompt, the AI will deliver a finished, perfect product. In reality, professional publishing demands consistency and control. When the goal shifts from "making art" to "producing scalable visual assets," the priority becomes the "time-to-edit" rather than the "time-to-prompt." We need systems that allow for quick failures so we can get to the usable frame faster.

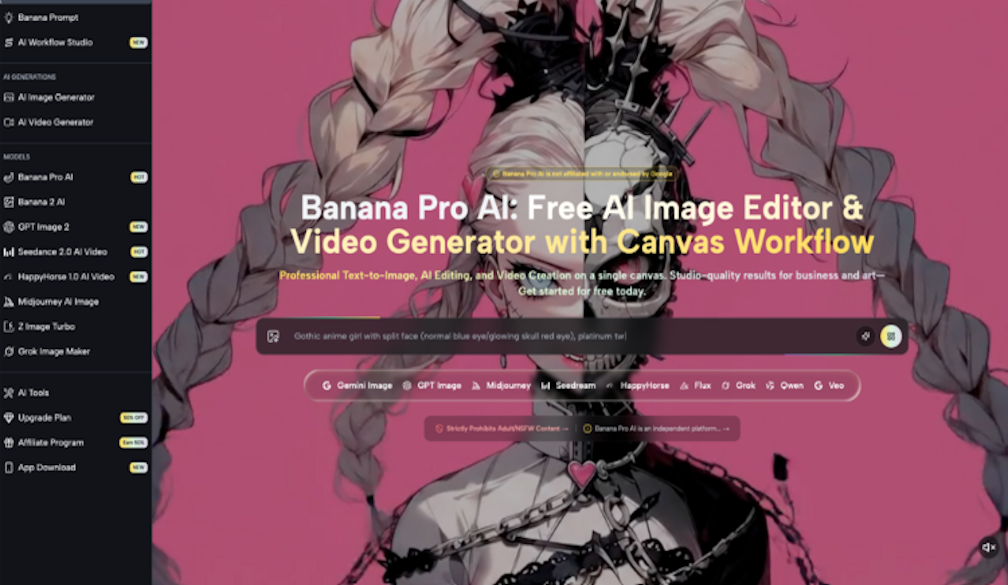

Drafting at the Speed of Social: The Case for Nano Banana

High-velocity environments like social media management require a "good enough" frame delivered instantly. This is where lightweight models, such as Nano Banana, become the backbone of the production stack. Unlike their heavier counterparts, these models are optimized for latency. They are designed to give the creator a visual baseline in seconds, allowing for a rapid-fire iterative loop.

When a creator is working on internal deck visuals or a series of rapid-response social posts, the nuances of ultra-high-resolution textures are less important than the general composition and mood. Using Nano Banana allows a team to generate dozens of concepts in the time it would take a larger model to produce one. This doesn't just save time; it changes the creative process. It encourages exploration because the cost of a "bad" generation is virtually zero. You aren't committed to a slow render, so you are free to pivot your visual strategy mid-stream.

However, we should be clear about the trade-offs. While speed is the primary benefit here, lightweight models like Nano Banana usually sacrifice some of the complex prompt adherence found in larger, heavier models. If you need a hyper-specific, multi-subject scene with intricate lighting interactions, a lightweight model may struggle. The goal of this tier isn't perfection; it's the efficient creation of a foundation.

The Surgical Pivot: Refinement over Replacement

One of the biggest mistakes in AI workflows is hitting "regenerate" when an image is 90% perfect. If the composition is right but the hand is distorted or a logo is placed awkwardly, the solution isn't a new prompt—it's an AI Image Editor. A canvas-based editor allows an operator to intervene manually, using in-painting or localized adjustments to fix errors.

From an operational standpoint, spending thirty seconds on a canvas correction is far more efficient than spending ten minutes in a prompt-refinement loop. This surgical approach treats the AI output as a starting point rather than a final destination. By using the AI Image Editor as a bridge, teams can take a low-fidelity draft from a fast model and polish it into a brand-ready asset. This is where the Banana AI ecosystem proves its worth: it acknowledges that the human creator is still the final arbiter of quality.

This workflow reduces the frustration that often leads to "AI burnout." Instead of fighting the model to get a specific result, the operator uses the tool to get close, then uses the editor to get home. It’s the difference between trying to describe a haircut to someone over the phone versus just showing them where to snip with the scissors.

Workflow Comparison: Integrated Platforms vs. Disconnected Tooling

A significant source of friction for creative teams is the "context-switching" tax. Generating an image in one tab, downloading it, uploading it to a traditional design suite like Photoshop or Canva, realizing it needs a minor tweak, and then heading back to the generator is a fragmented and slow process. This is why Banana Pro and similar integrated platforms have gained traction.

By keeping the generation and the editing in the same environment, the feedback loop remains tight. An operator can generate an image and immediately pull it into a canvas workflow for cleanup. This integration also has an economic component. Heavy models often consume more credits or compute per image. By running high-frequency concepting on a lighter model and switching to a "Pro" model only for the final "hero" assets, teams can stretch their production budgets much further.
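
The budget effect of tiering can be sketched the same way. The credit prices below are hypothetical placeholders, and no real platform's pricing is implied. `campaign_cost` is an illustrative helper that compares two approaches: running every image on a Pro model, or drafting on a light model and reserving Pro for hero assets.

```python
# Hypothetical credit prices per image; real platforms price differently.
LIGHT_CREDITS_PER_IMAGE = 1
PRO_CREDITS_PER_IMAGE = 4


def campaign_cost(drafts: int, heroes: int, tiered: bool) -> int:
    """Credits spent on a campaign (illustrative model only).

    Tiered: drafts run on the light model, only hero assets on Pro.
    Untiered: every image, draft or hero, runs on Pro.
    """
    if tiered:
        return drafts * LIGHT_CREDITS_PER_IMAGE + heroes * PRO_CREDITS_PER_IMAGE
    return (drafts + heroes) * PRO_CREDITS_PER_IMAGE


drafts, heroes = 40, 5
print(campaign_cost(drafts, heroes, tiered=False))  # 180
print(campaign_cost(drafts, heroes, tiered=True))   # 60
```

The exact ratio depends entirely on the real prices and the draft-to-hero mix. The structure of the calculation stays the same.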

The "operator" role is evolving. We are moving away from being "prompt engineers"—a term that already feels dated—and toward being asset curators. The value lies in knowing which tool in the stack to use for which task. You don't use a sledgehammer to hang a picture frame, and you shouldn't use a heavy foundation model to generate a simple blog icon.

The Limits of the Nano Approach: What We Cannot Conclude Yet

It is important to reset expectations regarding these lightweight workflows. Despite the efficiency gains, "Nano" models still face significant hurdles. For instance, they often struggle with rendering text: if you need specific, legible words within an image, these models will likely fail without heavy manual intervention. We also cannot yet conclude that these automated pipelines can maintain long-term stylistic consistency across months of content without custom LoRA (Low-Rank Adaptation) fine-tuning.

Furthermore, there is a lingering uncertainty about automated quality control. While we can speed up the generation and editing, we still lack a truly reliable "set and forget" layer that can judge if an image meets brand standards without a human eye. Every "high-velocity" stack still requires a human operator to catch the uncanny valley glitches that models—especially faster, smaller ones—are prone to producing. We are in a transitional phase where the tools are fast, but the oversight remains manual and cognitively demanding.

Implementing a Tiered Visual Strategy

For creative leads looking to restructure their media production, the first step is establishing a "Model Tier" checklist. This prevents the team from defaulting to the most expensive or slowest tool for every task. A sample workflow might look like this:

- Tier 1 (The Draft): Use Nano Banana for rapid brainstorming, layout testing, and "vibe" checks. The goal here is a sub-5-second turnaround.

- Tier 2 (The Refinement): Once a composition is selected, use the AI Image Editor to fix anatomical errors, adjust cropping, or swap out background elements.

- Tier 3 (The Hero): Only for high-stakes assets—like a website homepage or a print ad—should the team move to a high-fidelity model or a "Pro" level render.
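
One way to encode a checklist like this is a small routing function. This is a sketch under assumptions. The `Tier`, `AssetRequest`, and `route` names and the `HIGH_STAKES` placement set are hypothetical. The function also reads the tiers as a sequence: every asset starts as a draft, and only high-stakes assets with a chosen composition go on to a hero render.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    DRAFT = 1       # lightweight model: brainstorming, layout tests, vibe checks
    REFINEMENT = 2  # canvas editor: fix anatomy, cropping, backgrounds
    HERO = 3        # high-fidelity or "Pro" render for high-stakes assets


# Hypothetical set of high-stakes placements; each team defines its own.
HIGH_STAKES = {"homepage", "print_ad"}


@dataclass
class AssetRequest:
    placement: str              # e.g. "linkedin_header", "homepage"
    composition_selected: bool  # has a draft layout been chosen yet?


def route(request: AssetRequest) -> Tier:
    """Pick the cheapest tier that fits the request."""
    if not request.composition_selected:
        return Tier.DRAFT
    if request.placement in HIGH_STAKES:
        return Tier.HERO
    return Tier.REFINEMENT


print(route(AssetRequest("linkedin_header", composition_selected=False)))  # Tier.DRAFT
print(route(AssetRequest("linkedin_header", composition_selected=True)))   # Tier.REFINEMENT
print(route(AssetRequest("homepage", composition_selected=True)))          # Tier.HERO
```

Keeping the high-stakes list in one place makes the "don't default to the most expensive tool" rule explicit and easy to review.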

By setting internal benchmarks for time-to-output, teams can measure the actual ROI of their AI stack. If switching to a faster model like Nano Banana reduces the time spent on social graphics by 40%, that is a quantifiable win that can outweigh a minor dip in initial pixel clarity.
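
Measuring that kind of win only requires before-and-after timings for the same asset type. The sketch below uses a hypothetical `percent_reduction` helper and made-up sample timings to compare median time-to-output. It uses the median so that one unusually slow asset does not skew the result.

```python
from statistics import median


def percent_reduction(before: list[float], after: list[float]) -> float:
    """Percentage drop in median time-to-output from `before` to `after`."""
    baseline = median(before)
    if baseline <= 0:
        raise ValueError("baseline median must be positive")
    return (baseline - median(after)) * 100 / baseline


# Hypothetical minutes per social graphic, before and after the switch.
before_minutes = [28, 30, 35, 26, 31]  # median 30
after_minutes = [17, 18, 21, 16, 19]   # median 18
print(f"{percent_reduction(before_minutes, after_minutes):.1f}% faster")  # 40.0% faster
```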

Ultimately, the goal of a high-velocity content stack is to remove the "magic" from the process and replace it with predictable engineering. When the tools are integrated and the tiers are defined, the creative team can stop worrying about how the AI works and start focusing on what they are actually trying to communicate. Speed is not just about being fast; it’s about having the room to be better through more frequent iteration.